Between October 31 and November 4, 2018, the annual Conference on Empirical Methods in Natural Language Processing (EMNLP 2018) took place in Brussels, Belgium. Webinterpret attended the conference and took part in the shared task on parallel corpus filtering of the 3rd Conference on Machine Translation (WMT 2018), held at the same venue.

Parallel corpus filtering tackles the problem of cleaning noisy parallel corpora. A parallel corpus is a large collection of sentences and their translations. Translations are paired with their source sentences automatically, and this process is imperfect, so sentences can be paired incorrectly. The crux of the problem is how to identify such noisy examples and remove them from the corpus.

In this shared task, the organizers provide a very noisy 1 billion word German–English corpus crawled from the web and ask participants to select two subsets of sentence pairs amounting to (a) 100 million words and (b) 10 million words. The quality of the resulting subsets is determined by statistical and neural Machine Translation (MT) systems trained on the selected data. The quality of the translation systems is measured by computing the BLEU score on (a) the official WMT 2018 news translation test set and (b) another undisclosed test set.
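The selection step can be pictured as ranking sentence pairs by a quality score and keeping the best ones until a word budget is used up. The sketch below illustrates that idea only; the function name `select_top_pairs`, the whitespace tokenisation, and the choice to count words on the target side are assumptions made for the example, not details taken from the task definition.

```python
from typing import List, Tuple

ScoredPair = Tuple[float, str, str]  # (score, source sentence, target sentence)


def select_top_pairs(scored_pairs: List[ScoredPair], word_budget: int) -> List[Tuple[str, str]]:
    """Keep the highest-scoring pairs until the word budget would be exceeded.

    Assumption: words are counted on the target side by whitespace splitting.
    """
    ranked = sorted(scored_pairs, key=lambda item: item[0], reverse=True)
    selected = []
    words_used = 0
    for _score, source, target in ranked:
        n_words = len(target.split())
        if words_used + n_words > word_budget:
            break  # the next-best pair no longer fits in the budget
        selected.append((source, target))
        words_used += n_words
    return selected


toy_pairs = [
    (0.9, "a b", "c d"),
    (0.1, "x", "y z w"),
    (0.5, "e", "f"),
]
subset = select_top_pairs(toy_pairs, word_budget=3)
```

With the toy data, the two best-scoring pairs fit in the three-word budget and the lowest-scoring pair is left out.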

The raw parallel corpus provided exhibits noise of all kinds (wrong language in source and target, sentence pairs that are not translations of each other, bad language, incomplete or bad translations, etc.). We address this problem under the framework of machine learning where the goal is to estimate to what extent a pair of sentences in two languages correspond and, therefore, can be considered translations of each other.

Given a pair of sentences, we first compute a set of features indicating to what extent the sentences correspond to each other. At this stage, we consider three different types of feature:

- Adequacy features measure how much of the meaning of the original is expressed in the translation and vice versa. We use probabilistic lexicons with different formulations of word alignment to estimate the extent to which the words in the original and translated sentences correspond to each other.

- Fluency features aim at capturing if the sentences are well-formed grammatically, contain correct spellings, adhere to common use of terms, titles and names, are intuitively acceptable and can be sensibly interpreted by a native speaker. We use two different features, both based on language models.

- Shape features measure the mismatch between the frequency of different tokens from each sentence in the pair. They can be seen as an extension of adequacy features with a particular focus on the form of the words in the sentences.

In total we use 48 features: 4 adequacy, 4 fluency, and 40 shape features.
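To make the feature types more concrete, here is a minimal sketch of one adequacy-style and one shape-style feature. It is not the feature set used in our submission: the lexicon format, the probability floor, the averaging scheme, and the particular token classes (`digits`, `punctuation`, `length`) are all assumptions chosen for illustration.

```python
import math
import re
from typing import Dict, List, Tuple

Lexicon = Dict[Tuple[str, str], float]  # (source word, target word) -> probability

# Hypothetical token classes for the shape features.
SHAPE_PATTERNS = {
    "digits": re.compile(r"\d+(?:[.,]\d+)*"),
    "punctuation": re.compile(r"[^\w\s]+"),
}


def lexical_adequacy(source: List[str], target: List[str],
                     lexicon: Lexicon, floor: float = 1e-6) -> float:
    """Average log-probability of each target word given the source words.

    Each target word is scored by the mean lexicon probability over all
    source words (a simple, uniform-alignment formulation).
    """
    if not source or not target:
        return math.log(floor)
    total = 0.0
    for t in target:
        p = sum(lexicon.get((s, t), 0.0) for s in source) / len(source)
        total += math.log(max(p, floor))  # floor avoids log(0) for unseen words
    return total / len(target)


def shape_mismatch(source: List[str], target: List[str]) -> Dict[str, int]:
    """Absolute difference in the counts of each token class, plus length."""
    features = {}
    for name, pattern in SHAPE_PATTERNS.items():
        n_src = sum(1 for tok in source if pattern.fullmatch(tok))
        n_tgt = sum(1 for tok in target if pattern.fullmatch(tok))
        features[name] = abs(n_src - n_tgt)
    features["length"] = abs(len(source) - len(target))
    return features


toy_lexicon = {("haus", "house"): 0.9}
good = lexical_adequacy(["haus"], ["house"], toy_lexicon)
bad = lexical_adequacy(["haus"], ["tree"], toy_lexicon)
shape = shape_mismatch("Das kostet 25 Euro .".split(),
                       "That costs 30 or 40 euros".split())
```

A pair whose words are covered by the lexicon gets a higher adequacy value than an unrelated pair, while the shape features grow when, for example, the two sentences contain a different number of numeric tokens.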

These features are then used to predict a binary label indicating whether the sentences in the pair can be considered translations of each other. After initial tests, we chose Gradient Boosting as the classifier for our final submission. Gradient boosting produces a prediction model in the form of an ensemble of weak prediction models, typically decision trees. Like other boosting methods, it builds the model in a stage-wise fashion, and it generalises them by allowing optimisation of an arbitrary differentiable loss function.
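The stage-wise idea can be shown with a toy, pure-Python implementation. This is a sketch under simplifying assumptions, not the classifier from our submission: the weak learners are single-split decision stumps, the loss is the logistic loss, each stump is fitted to the negative gradient `y - sigmoid(score)`, and all names (`fit_stump`, `fit_gradient_boosting`, `predict_proba`) are hypothetical.

```python
import math
from typing import List, Optional, Tuple

Stump = Tuple[int, float, float, float]  # feature index, threshold, left value, right value
Model = Tuple[float, float, List[Stump]]  # bias, learning rate, stumps


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_stump(X: List[List[float]], residuals: List[float]) -> Optional[Stump]:
    """Find the single split that best fits the residuals in squared error."""
    best, best_err = None, float("inf")
    for j in range(len(X[0])):
        for threshold in sorted({row[j] for row in X}):
            left = [r for row, r in zip(X, residuals) if row[j] <= threshold]
            right = [r for row, r in zip(X, residuals) if row[j] > threshold]
            if not left or not right:
                continue
            l_mean, r_mean = sum(left) / len(left), sum(right) / len(right)
            err = (sum((r - l_mean) ** 2 for r in left)
                   + sum((r - r_mean) ** 2 for r in right))
            if err < best_err:
                best, best_err = (j, threshold, l_mean, r_mean), err
    return best


def fit_gradient_boosting(X: List[List[float]], y: List[int],
                          n_rounds: int = 20, learning_rate: float = 0.5) -> Model:
    """Add one stump per round, each fitted to the negative gradient of the log-loss."""
    positive_rate = min(max(sum(y) / len(y), 1e-6), 1 - 1e-6)
    bias = math.log(positive_rate / (1 - positive_rate))
    scores = [bias] * len(X)
    stumps: List[Stump] = []
    for _ in range(n_rounds):
        residuals = [label - sigmoid(s) for label, s in zip(y, scores)]
        stump = fit_stump(X, residuals)
        if stump is None:  # no feature can be split any further
            break
        stumps.append(stump)
        j, thr, left_val, right_val = stump
        scores = [s + learning_rate * (left_val if row[j] <= thr else right_val)
                  for s, row in zip(scores, X)]
    return bias, learning_rate, stumps


def predict_proba(model: Model, row: List[float]) -> float:
    """Probability that the pair described by `row` is a valid translation."""
    bias, learning_rate, stumps = model
    score = bias
    for j, thr, left_val, right_val in stumps:
        score += learning_rate * (left_val if row[j] <= thr else right_val)
    return sigmoid(score)


# Toy data: two made-up features per pair, label 1 = translation, 0 = noise.
X_train = [[0.9, 0.1], [0.8, 0.2], [0.2, 0.9], [0.1, 0.7]]
y_train = [1, 1, 0, 0]
model = fit_gradient_boosting(X_train, y_train)
```

In practice one would rely on an established library implementation with deeper trees; the point here is only that every round adds a weak model that corrects the errors of the current ensemble.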

An important nuance of this task is how to obtain suitable examples to train the classification model. In the context of our task, positive examples are pairs of original and translated sentences, whereas negative examples are sentence pairs that cannot be considered translations of each other. Positive examples can be easily obtained from clean parallel corpora. While there is no explicit corpus of negative examples, these can be generated on demand by perturbing one or both of the sentences in a pair to create a new synthetic pair that, by construction, constitutes a negative example. We apply three different perturbation operations:

- Swap: exchange source and target sentences.

- Copy: two copies of the same string. We apply it to both source and target strings.

- Randomisation: replace the source or target sentence by a random sentence from the same side of the corpus.
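The three operations above can be sketched as follows. This is an illustrative sketch rather than our actual data-generation code: the function names, the fixed random seed, and the decision to exclude the original sentence when drawing a random replacement are assumptions.

```python
import random
from typing import List, Tuple

Pair = Tuple[str, str]  # (source sentence, target sentence)


def swap(pair: Pair) -> Pair:
    """Exchange the source and target sentences."""
    return (pair[1], pair[0])


def copy_source(pair: Pair) -> Pair:
    """Use the source string on both sides."""
    return (pair[0], pair[0])


def copy_target(pair: Pair) -> Pair:
    """Use the target string on both sides."""
    return (pair[1], pair[1])


def randomise(pair: Pair, corpus: List[Pair], rng: random.Random, side: str) -> Pair:
    """Replace one side with a random sentence from the same side of the corpus."""
    index = 0 if side == "source" else 1
    # Assumption: never draw the original sentence, so the result is a real mismatch.
    candidates = [p[index] for p in corpus if p[index] != pair[index]]
    if not candidates:
        raise ValueError("corpus has no alternative sentence on the requested side")
    replacement = rng.choice(candidates)
    return (replacement, pair[1]) if side == "source" else (pair[0], replacement)


def make_negatives(corpus: List[Pair], seed: int = 0) -> List[Pair]:
    """Generate five synthetic negative examples for every clean pair."""
    rng = random.Random(seed)
    negatives = []
    for pair in corpus:
        negatives.append(swap(pair))
        negatives.append(copy_source(pair))
        negatives.append(copy_target(pair))
        negatives.append(randomise(pair, corpus, rng, "source"))
        negatives.append(randomise(pair, corpus, rng, "target"))
    return negatives


clean_corpus = [
    ("Guten Morgen", "Good morning"),
    ("Danke schön", "Thank you very much"),
    ("Wie geht es dir?", "How are you?"),
]
negatives = make_negatives(clean_corpus)
```

The clean pairs serve as positive examples and the generated pairs as negative examples when training the classifier.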

As can be seen above, we focus on perturbation operations that disrupt the correct alignment between the sentences. Thus, we aim at identifying correctly aligned sentence pairs. A complementary approach would be to aim at detecting how valuable a sentence pair is when used for training MT systems. However, this is left for future developments.

The submitted paper, which contains all the technical details, is publicly available (link). We also presented our approach informally during a poster session in which the participants demonstrated their systems.

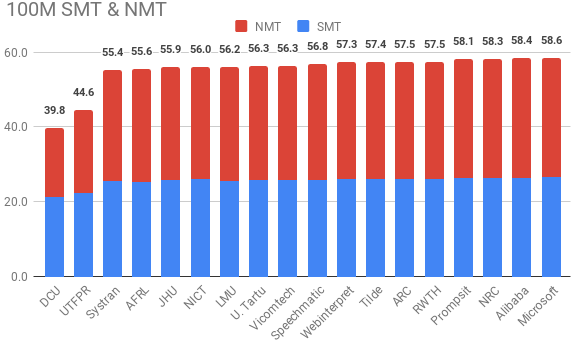

Webinterpret scored among the top third of participants, only a few points behind the best performing systems. The next chart displays the best submission of each participant stacking BLEU results for statistical (blue) and neural (red) machine translation.

We obtained a score of 26.1% for statistical MT (about 99% of the best submission, 26.5%) and 31.2% for neural MT (about 97% of the best, 32.1%). Like our submission, the top-scoring solution relies on features describing each sentence pair, but it focuses on cross-entropy minimisation to make the final assessment of the pair. Detailed results of the task can be found here.